Those who follow me know that over the last year, there’s one technology that has been my obsession: Virtual Reality (VR), specifically the Oculus Rift. I was first introduced to the headset that eventually became Oculus by Emblematic Group’s Nonny de la Peña, and I have gone on to work as a consulting producer for a piece of VR journalism at Gannett (see Harvest of Change) and also started a new Virtual Reality Storytelling class at Newhouse. I continue to believe that VR is a big part of the future of journalism and media, but it doesn’t end there.

The HoloLens wearable visor. Image courtesy of Microsoft.

I’m actually surprised to be able to say that a mere two years later, another technology has come along that also has the potential to fundamentally change the way people interact with information. That technology is augmented reality, specifically the approach taken by Microsoft with its upcoming HoloLens wearable device. I had the privilege of spending about an hour with HoloLens at the E3 Electronic Entertainment Expo, and it’s already changing the way I think about both VR and the larger field of what I call Experiential Media.

What is Augmented Reality?

First, I need to explain a couple of terms. Virtual Reality (VR) and Augmented Reality (AR) are close cousins, but they differ in design and approach. Like VR, AR is about seeing and interacting with computer-generated information through a wearable headset, but that’s where the similarities end. VR takes you out of your current environment and tricks your brain into thinking you’re somewhere else, while AR puts information into the world you live in. For this reason, people who get paid much more than I do to explore new technologies predict that AR could become a significantly larger market than VR.

Until now, the best-known AR device was Google Glass, a noble but limited first attempt at consumer AR that has since been put on hold. This was partly because of the social stigma of wearing an obvious computer (likely to be a challenge for HoloLens as well) and the effect that had on social dynamics (“Are you filming me?”). But I think another reason was that Glass covered only one tiny corner of your field of view, forcing you to look up and to the right to see notifications, pictures and videos.

HoloLens has a much larger field of view, as well as superior visual processing that makes the images it projects appear to be in your environment. This includes depicting them at the proper distance — whether right in your face or all the way across the room.

First Stop: the Halo 5 HoloLens Demo

Thanks in large part to Newhouse Television, Radio and Film alum Larry Hryb, now “Twitter famous” as Major Nelson, the fan evangelist for Xbox, I was able to spend about an hour with HoloLens in three separate demos. It wasn’t perfect, but I was floored by how well the device worked and how robust it was. Though it weighs less than a pound, it apparently runs a full installation of the upcoming Windows 10 with built-in “holographic processing.”

I walked away with a huge grin on my face, feeling that HoloLens was a truly magical device. Just as in the demos on the HoloLens website, it populates your world with fully interactive 3D models, characters and pretty much anything else you can imagine in 3D. You can walk around a large physical space and see virtual objects in it that appear to interact with the same physical objects you do, and you can interact with them simply by moving around or, in some cases, by “air tapping” to select and zoom. Think of any movie where the characters see and interact with ghosts, clearly seeing people and objects that others around them don’t. That’s what it’s like. It does this so well that I now understand why Microsoft has invented new terminology to describe the effect. They call it mixed reality.

The first demonstration was part of a special preview of the video game Halo 5, and it was offered to a large number of people willing to stand in line at the E3 conference. We were escorted into a room physically designed to look like a briefing room from the game and fitted with our headsets. Part of this involved measuring the distance between my pupils so that it could be dialed into the device (my number was 61). They then had me sit on a chair facing what appeared to be a blank, black screen. Once the headset was on my head, they asked me if four boxes were lining up. I didn’t see anything at first, so I asked them to readjust the visor, and then, bam! Where I’d previously seen a blank screen, I now saw a 2D welcome message in light that appeared to be painted onto the screen, just as if I were looking at a flat-screen TV.

Next, they asked me to stand up, turn to the right and follow the arrows. This is where things started to get trippy. At the end of a dark tunnel, I saw a very bright, floating, pulsating arrow way off in the distance. As I walked toward it, it grew closer, staying right in line with the wall at the end of the corridor. A grin spread across my face as I practically walked right into the arrow. It looked so real that I tried to reach out and touch it (which did not work: my hand appeared to be behind it even though it should have been in front).

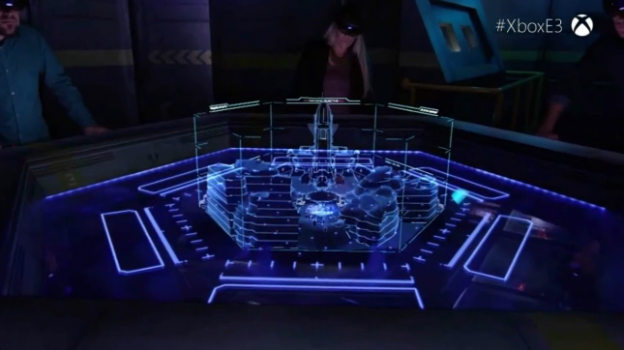

No matter. I turned right and was guided by more floating arrows to my place at a round table. My initial thought was that it looked like the briefing table in Return of the Jedi, where a 3D view of the second Death Star appeared. Sure enough, once everyone was around the table, we saw a fully three-dimensional map of a level in Halo materialize right in front of our eyes. Then a robot appeared, talking and gesturing to different people around the table.

At this point, my journalistic skeptic filters turned on, and I started to move slightly to the left and right to see if the perspective changed. The image didn’t move from its spot in the middle, but it did disappear to the right if I moved my head too far to the left. I even crouched down and looked at it from below, and it still appeared as if it were physically on the table. So much for breaking the illusion. (Side note: I had no way of telling if everyone had their own unique view of the 3D map, or if we were all seeing the same thing with the same perspective.)

This is when I noticed the biggest shortcoming of HoloLens: its small field of view. If you hold your hand up vertically in front of your face about 3 inches from your nose, that’s roughly the height and width of the “screen” that displays holograms. This can be a little distracting at first, but what I saw inside it was so incredible that within seconds I had adapted and hardly noticed the limit.

What was also remarkable is that people on the other side of the table disappeared behind the virtual 3D images projected by HoloLens, significantly adding to the illusion that these 3D objects were physically in the room with us. Some of these objects were solid, while others were line drawings. In some cases, I could see the faint outline of a physical person through the solid holograms, though it was barely noticeable.

Project X-Ray

My next two demos were private, and from what I understand, very few people have been given access to them yet. They were a full-room game called Project X-Ray and the HoloLens version of Minecraft (purchased by Microsoft last year) that was shown at the Xbox briefing the day before.

X-Ray seemed designed to showcase the device’s 360-degree range of experience. I was in a room that was empty in the middle except for a couple of couches along the sides. After I put on the HoloLens, a crack appeared in the wall in front of me and a burrowing robot stuck its head through. Then a few smaller robots came out of its mouth, swarmed around me and shot very large bullets at me. I was told I could dodge the bullets, and when I did, I watched them whiz past the right and left sides of my head. I could also shoot back. If you can imagine the scene in The Matrix where Neo dodges slow-moving bullets, this is close to what it was like, except that the bullets looked real.

The last feature of this demo was the ability to look virtually behind the walls by turning on X-Ray, which I did by pulling the left trigger on a wireless Xbox controller. I could then see through the walls (virtually of course), and see the little squiddies moving between imaginary two-by-fours.

Holographic Minecraft

The final demo was of a special HoloLens version of Minecraft. You can see an approximation of what I saw in this video from Microsoft. Apart from the much narrower field of view, everything worked as depicted there, with the Minecraft world spawning directly on the table and virtual objects occluding the real objects behind them. But one aspect got me thinking more than any other, and it illustrates the power of the so-called “holographic processing unit” on the device.

This image from Microsoft approximates what a person wearing HoloLens sees when playing a new version of Minecraft in development for the device.

When we started the demo, I was told to look at the wall and say “Show world.” Something like a flat-screen TV appeared, showing a typical Minecraft screen in 2D. This was similar to the demo in the HoloLens marketing video of Netflix projected onto any wall. I found this interesting, but not particularly compelling.

But then the developer issued a spoken command that switched the view from 2D to 3D, and the effect was magical. That “screen” now looked like a portal into a fully 3D universe in the next room. I was told I could walk right up to it to look inside, and I did. I must have looked like an idiot standing right up against a blank wall, but to me it wasn’t blank. As I got closer, I could see further into the 3D world at an angle to the left or right. It was like the scene in Who Framed Roger Rabbit where a wall is destroyed and you see Toontown on the other side.

For film fans, this also means you could be sitting on your couch, in a train station or in a classroom, spawn a large screen and load up a 3D movie like Avatar or The Lego Movie. If I made screens for a living, I would be thinking seriously about what this could do to my business in the near future.

And, Yes, Some Challenges

I have already mentioned the narrow field of view, which I currently consider a minor annoyance, though it could be distracting enough to limit adoption or repeat use among some purists. It is most noticeable when you’re moving freely through a 360-degree space; if you’re sitting or standing in front of something and looking forward, or working on 3D objects at a desk, you hardly notice it.

Another challenge I suspect (but cannot yet prove) is light. Every demo I was in took place in a darkened room with walls painted mostly black. One room had a few lighter-colored pictures on the wall, and I did try to see whether the light-colored virtual objects moving around me looked different against them. Forgive me if I was a little distracted by flying robots trying to take me out, but I remember seeing one of the robots in front of a light background with perhaps a little transparency.

What I wasn’t able to test was whether an opaque virtual object could appear against a light physical background without looking washed out or letting the real-world objects behind it show through. From what I understand, doing this would require a major breakthrough in optics and is considered the holy grail of full AR. However, from what I experienced, the inability to do this isn’t a show-stopper, and solving it could be a point of differentiation for future AR devices, such as the Google-backed Magic Leap, which has yet to give a demo to anyone other than a handful of investors. It may mean, though, that using HoloLens in broad daylight isn’t feasible just yet. This is something to watch as Microsoft becomes more public about the particulars of the device.

Opportunities for Journalism and Media

I almost hesitate to share these ideas, not out of fear of someone else doing them (execution is everything) but out of fear of embarrassing my future self by thinking too small. As with VR, I have a sense that AR is its own unique medium. People in communications fields need to resist the urge to simply shovel over what worked on PC, gaming and mobile platforms and assume it will work just as well in AR. Since AR takes place in the physical world, I almost wonder if we should be looking to the pre-PC analog world for inspiration. If history is any guide, everyone who ventures into this new space will make mistakes that they can laugh at later. That’s what makes it fun.

AR TRAVELING EXHIBITS: My first idea is incredibly practical. In my fall and spring Virtual Reality Storytelling classes, I want to see what students can do to create both VR and AR experiences from the same content. Here’s one example: my student Sawyer Rosenstein created a virtual space museum that takes you through the history of NASA and spaceflight. In VR, he had to build the walls and corridors, but the objects in it could just as easily be projected into a real museum as part of an AR exhibit. I think this concept could be very powerful for museums, especially those that hold very delicate, unique items they would like to send on tour but can’t, in order to protect them. With something like HoloLens, could future exhibits travel as bits rather than atoms?

STORY RE-ENACTMENT IN PHYSICAL SPACE: Assuming HoloLens can be used in any light conditions, could we virtually re-enact famous historic scenes in the places where they actually occurred? Imagine standing at the site of the JFK assassination in Dallas and walking through the scene to examine evidence, or being virtually present for the tearing down of the Berlin Wall (or its construction, for that matter) at the spot where it first came down. Journalists and documentary filmmakers will have a ball with this.

DISTANCE LEARNING: Online education currently centers on the webcam, with teachers and students seeing each other in a grid and communicating through voice and text. Replace that webcam with a 3D scanner like the Kinect (which HoloLens apparently has built into it), and you could be sitting in a blank room yet be virtually surrounded by your classmates and professor. Of course, this could also be done in VR, but I fear that in the near term, spending 80 minutes inside a VR headset would feel claustrophobic and induce simulation sickness. Simulation sickness isn’t an issue with AR because what your eyes see stays in sync with what your inner ear senses, and you would also be free to use physical aids in your environment, such as your computer keyboard or a pen and paper for notes.

IN-ROOM GAMES AND MOVIES: One of the hottest plays in New York City right now is Sleep No More, a re-imagining of Shakespeare’s Macbeth in a Victorian mansion. As you walk through the house, actors are in each room playing out a scene in which they sometimes involve you. I could imagine this same approach being taken with future movies and story-driven games, where you walk through your own house to find virtual characters acting out scenes which may even involve you.

Take the full range of what’s possible in the physical world, add interactivity, and the possibilities for this technology are almost unlimited. Even though HoloLens has no launch date, I think it’s compelling enough that journalists, filmmakers, ad agencies and anyone else who deals with information should begin thinking about how they will use it. The future of information may very well be in our physical world, augmented with holograms.

Dan Pacheco is the Peter A. Horvitz Chair in Journalism Innovation at the S.I. Newhouse School at Syracuse University. Currently a professor teaching entrepreneurial journalism and innovation, he is also an active entrepreneur and CEO of BookBrewer, which he co-founded. He’s previously worked as a reporter (Denver Post), online producer (Washingtonpost.com, founding producer), community product manager (AOL), and news product manager (The Bakersfield Californian). His work has garnered numerous awards, including two Knight-Batten Awards for Innovation in Journalism, a Knight News Challenge award in 2007 (Printcasting), and an NAA 20 Under 40 award (Bakotopia). At last count, he’d launched 24 major digital initiatives centered around community publishing, user participation, or social networking. Pacheco is a proponent of constant innovation and reinvention for both individuals and industries. He believes future historians will see today as the golden age of digital journalism, and that its impact will overshadow current turmoil in legacy media.

People have been imagining and dreaming for thousands of years. Then came writing, which put those dreams down as stories, biographies and fables. Then came drama, which evolved into movies and videos.

This is the next step in that revolutionary evolution: a way to sell, show or dazzle others with someone’s dreams, thoughts and ideas … in 3D.

The barriers between realities are broken!!! Dreams and visions and unknown scenarios … Crazy ideas!!!

Imagine watching an NBA game at home from a stadium seat of your choice. The whole game would be at your feet in 3D, as if you were watching from that seat. Of course, they could sell the premium box seats for a premium price. MS has a studio with 100 cameras to record objects in 3D for this kind of scenario.
