The following piece is a guest post from Elizabeth Stephens, an assistant professor at the University of Missouri School of Journalism. Stephens is also leading a DigitalEd online training on audience analytics on November 30. You can register for it here.

When a story from ColumbiaMissourian.com was picked up by The Drudge Report, the bump in pageviews was obvious. The story about a Trump piñata in St. Louis during the second presidential debate quickly became the site’s most-read story ever.

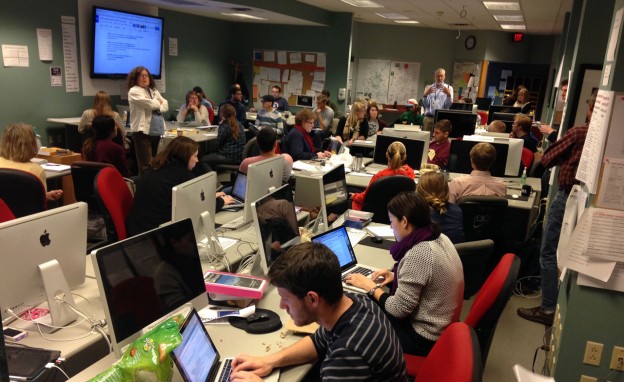

As soon as the alerts from Chartbeat came in, we knew something had happened. We quickly found the source of the bump through our analytics platforms, and then we dove into comment moderation. The story stayed at the top of our “big board” in the newsroom with four-digit concurrent visitors over several days, and it was at the center of our analytics report for the week.

Those big stories are obvious, but what about the stories that never make the “big board”? Do you track the lesser-read stories? How do you decide whether a story has performed well?

Flickr Photo by Blue Fountain Media and used under a Creative Commons license.

Smarter editorial analytics

When discussing analytics, it’s easy to gravitate to the big numbers and highlight the success stories. But at the Missourian, where the mission is serving the community, our goal is not to write stories that will go viral on The Drudge Report. We serve the community best through stories about city government, the university, local schools and sports. The piñata was a one-off success that had more to do with luck than with any strategizing on our part.

When we study analytics with our student journalists, understanding what resonated with our audience — and what didn’t — is more important than identifying the most-viral story. The Missourian has been sharing analytics reports with the newsroom since 2009. They’ve been revamped several times, and we recently took another look at what we’re sharing with our staff.

As we started digging into our reports, we realized we didn’t have a baseline for comparison. We typically picked out three stories to analyze based on pageviews — some for high numbers and others for low numbers — but we couldn’t say how those numbers fit in with the history of our site. We couldn’t definitively say what our averages and medians were for pageviews and visitors.

To solve this, we assessed the top 500 stories from the previous months to establish a baseline. We looked at each quintile to determine how different types of stories performed. That gave us a baseline not just for overall numbers but for expected performance by story type, such as sports features, university news and spot news.
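For newsrooms that want to try something similar, here is a minimal sketch in Python of how such a baseline could be computed. It is not the Missourian's actual process. The field names (`type`, `pageviews`), the function names and the sample numbers are all assumptions made up for illustration; in practice the rows would come from an export of your analytics platform.

```python
from collections import defaultdict
from statistics import mean, median, quantiles


def summarize(pageviews):
    """Return count, mean, median and quintile cut points for pageview counts."""
    summary = {
        "count": len(pageviews),
        "mean": mean(pageviews),
        "median": median(pageviews),
    }
    # n=5 yields the four cut points that separate the five quintiles.
    # Skip them for a single value, where cut points are not meaningful.
    if len(pageviews) >= 2:
        summary["quintile_cuts"] = quantiles(pageviews, n=5, method="inclusive")
    return summary


def baselines_by_type(stories):
    """Group stories by type and summarize each group plus the site overall."""
    grouped = defaultdict(list)
    for story in stories:
        grouped[story["type"]].append(story["pageviews"])
    report = {story_type: summarize(views) for story_type, views in grouped.items()}
    report["overall"] = summarize([story["pageviews"] for story in stories])
    return report


# Hypothetical sample data, not real Missourian numbers.
stories = [
    {"type": "sports feature", "pageviews": 100},
    {"type": "sports feature", "pageviews": 200},
    {"type": "sports feature", "pageviews": 300},
    {"type": "sports feature", "pageviews": 400},
    {"type": "sports feature", "pageviews": 500},
    {"type": "university news", "pageviews": 150},
    {"type": "university news", "pageviews": 250},
]

report = baselines_by_type(stories)
for story_type, summary in report.items():
    print(story_type, summary)
```

Reporting the median alongside the mean matters here because one viral outlier, like the piñata story, can pull the mean far above what a typical story earns.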

For example, when we publish a feature on the football team, we can judge how it performed against previous football features. If it didn’t perform well, we can take a look at what happened with social sharing and display on the site.
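One simple way to make that comparison concrete is to ask what share of earlier stories of the same type a new story outperformed. The sketch below assumes you already have a list of pageview counts for previous football features; the function name and the numbers are hypothetical.

```python
def share_outperformed(new_pageviews, previous_pageviews):
    """Return the fraction of previous stories with fewer pageviews than the new one."""
    if not previous_pageviews:
        raise ValueError("need at least one previous story to compare against")
    beaten = sum(1 for views in previous_pageviews if views < new_pageviews)
    return beaten / len(previous_pageviews)


# Hypothetical pageview counts for earlier football features.
previous_football_features = [850, 1200, 1500, 2100, 3400]

result = share_outperformed(1800, previous_football_features)
print(f"Outperformed {result:.0%} of previous football features")
```

A low share is a prompt to look at social sharing and site display, not a verdict on the story itself.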

Now we are able to compare apples to apples and analyze how a story performed against similar stories and against the site overall. But we have to go beyond just reporting the numbers to actually acting on them.

The University of Missouri. University photo used here with Creative Commons license.

What are your audience analytics challenges?

How do those reports and numbers change the work we do? Should we try a new approach to writing a meeting story? Do we change the tone or approach of social sharing? Do we need to let something go? These are the questions we’re asking in our newsroom, and you should be too.

In a DigitalEd online training on Nov. 30, I will discuss ways to answer these questions. We’ll discuss how to establish your baseline, how to figure out what the numbers tell you, which action items you can move forward on, what you want to test further and what to consider beyond the numbers.

Register for the online training and let’s talk about your analytics challenges!

Elizabeth Stephens is a news editor at the Columbia Missourian and assistant professor at the University of Missouri School of Journalism.