Inside the Oculus Rift headset, I’m acutely aware of my breathing. A farm landscape whizzes by as I swivel my neck, and then speed towards an icon with the push of a joystick. With a click, I’m on the back of a truck, staring down a herd of pixelated stampeding cattle. Is this journalism? Judge for yourself.

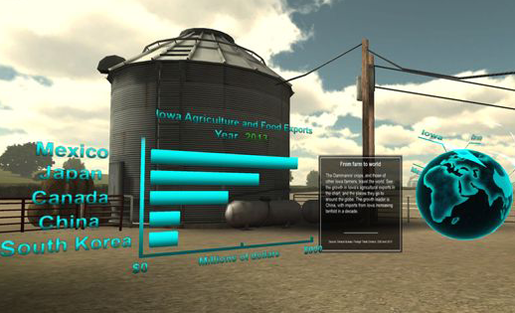

Produced by Gannett for the Des Moines Register, an Iowa daily, the virtual reality project “Harvest of Change” is part of a larger five-part print series examining how economic and demographic shifts are reshaping the lives of local farm families. Attendees at the 2014 Online News Association conference (ONA14) lined up to immerse themselves in a first-of-its-kind tour of a southwest Iowa farm owned by the Dammann family. Embedded in the VR landscape are 360-degree video segments that surround viewers while they learn from the farm’s family and workers about agriculture in transition.

‘Cusp interfaces’

While “Harvest of Change” was among the flashiest demos featured at this annual celebration of bleeding-edge journalism, it wasn’t alone in breaching the membrane between the digital and the physical. Several panelists and exhibitors in the conference expo, dubbed the Midway, showed projects that break through the screen or page in unfamiliar ways. Built for what I call “cusp interfaces,” such experiments work either by drawing users in or by reaching out to connect with them in real life. They are also often marked by a malleability of purpose: the same tools can both capture story elements and serve them up to audiences. Categories include:

Wearable rigs: The recent announcement of the Apple Watch goosed excitement about such devices, which include wristbands, pendants, and gloves. Wearable headset Google Glass was also on tap at the Midway for users to try, and featured prominently in a panel presented by USC journalism professor Robert Hernandez.

A self-described “hackademic,” Hernandez has been working with students to retrofit the headsets for reporting purposes — for example, creating an editing queue so that corrections can be made before content is shared. “What we’re trying to do,” he said, “is be proactive about this disruption.” This is not merely an academic exercise. Newsrooms such as Boston’s WBUR are already developing “Glassware.”

Wearables were at the top of the 10 trends for journalists flagged in futurist Amy Webb’s popular annual ONA presentation. As of September 18, she says, she’s tracking 253 devices, as well as related concepts such as “publishing for glance,” a term coined by content strategist Dan Shanoff to describe “a new class of ultra-brief news” to be served up on wrist displays.

Augmented artifacts: While wearables communicate intimately with users through signals such as taps and barely audible buzzes, apps such as ARchive allow stories to be hidden in plain sight in public spaces, accessible only via mobile phones. Hernandez worked with his students to augment the LA Library’s central branch, allowing app users to point their phones at physical landmarks such as a statue’s clay torch to trigger the display of related footage and photos.

At ONA14, he had panel participants load up the Re+public app to interact with slides of murals in selected cities. Suddenly, one mural came to life, with turning gears and flashing TV screens. Half of the panel attendees jumped to their feet, standing on chairs, laughing and vying to point devices at the screen.

As Hernandez observes, these murals aren’t journalism, but the experience was notable. Using devices to add new properties to familiar physical objects smacks of magic, and turns what could be a simple interaction on a screen into a memorable moment. As Josh Stearns of the Geraldine R. Dodge Foundation writes in a recent MediaShift piece, “Hands-on journalism doesn’t privilege the physical over the digital, but instead recognizes how the two can work productively together.”

Hands-free headlines: Of course, sometimes you’d rather free your fingers from the keyboard. That’s where new motion controllers come in. Steven King, an assistant professor of innovative and interactive media at UNC, demonstrated the Leap Motion controller in a session provocatively titled “The Holodeck is Real!” Plugged into a PC, the device lets users browse selected news sites by waving, pinching and tapping the air above it.

King says he’s working to answer the question: “How do we meet and tell stories with new technologies as they’re arriving?” However, the uses for this functionality are still not quite clear. While his informal post-panel Leap demo wowed with manipulation of a virtual 3D skull, it fell flat when he showed scrolling between articles. Still, last year’s announcement of a New York Times Leap Motion app generated headlines, and holds out hope that more breakthrough uses of this intriguing interface will be discovered.

Sensor stories: While controllers such as the Leap and Kinect sense motion, journalists are developing storytelling applications for all kinds of sensors, including those that can detect toxins, speed, distinct sounds, temperature, light, and more.

In June, Angela Washeck wrote for MediaShift about the “Sensors and Journalism” report released by the Tow Center for Digital Journalism. At ONA14, I sat down with the report’s author, Fergus Pitt, to ask what had happened since its release. He noted that the report had sparked conversation among journalists whose work overlaps with the citizen science sphere, such as Lily Bui, the executive editor of the SciStarter site. While journalists are still struggling to determine how accurate sensor-gathered data might be, crowdsourced sensor projects have proven valuable for audience engagement, said Pitt. He observed a particular uptick in projects involving drones, such as drone footage of the recent People’s Climate March submitted anonymously to Democracy Now. He predicts even more of this type of reporting following a recent Federal Aviation Administration decision clearing the use of drones by selected production companies.

No home runs yet

None of these experiments are home runs. Cusp interfaces are still works in progress. The Oculus Rift environment was a bit nausea-inducing, and taking a photo using Google Glass made me feel like I had double vision. Leap’s gestures seemed a bit like, well, a bunch of hand-waving, and Webb’s suggestion that attendees contemplate “glance journalism” prompted journalism professor Dan Kennedy to tweet: “I thought it might be parody…”

Still, just as these projects bridge the gap between the digital and the physical, they also straddle our known present and possible futures.

I catch a glimmer of this when Dan Pacheco, who holds a chair in journalism innovation at Syracuse University, offers to take a 3D scan of my head. Pacheco helped produce “Harvest of Change,” and is on the Midway to demonstrate how an iPad-powered scanner called the Structure Sensor can be used to build the 3D objects and characters featured in the VR production.

Self-consciously, I stand as he walks around me, scanning from all angles. He explains that he could use this to 3D-print a physical model of me — but that I should be careful not to check the box that would make my image free for use across the Web, lest I end up in a 3D porno. Suddenly the Midway drops away, and I’m there for just a moment, immersed in a future where that might become a common concern.

These are the places that cusp interfaces can take us. Looking glass, here we come.

Jessica Clark is the founder/director of Dot Connector Studio, a Philadelphia-based multiplatform strategy and production agency. She’s also the research director of Media Impact Funders, a knowledge network for foundations that support public-interest media projects. Follow along as she tracks discoveries at the cusp of the physical and the digital over at The Revenge of Analog Tumblr. Learn more at jessicaclark.com, or find her on Twitter: @beyondbroadcast.